Basic Objects

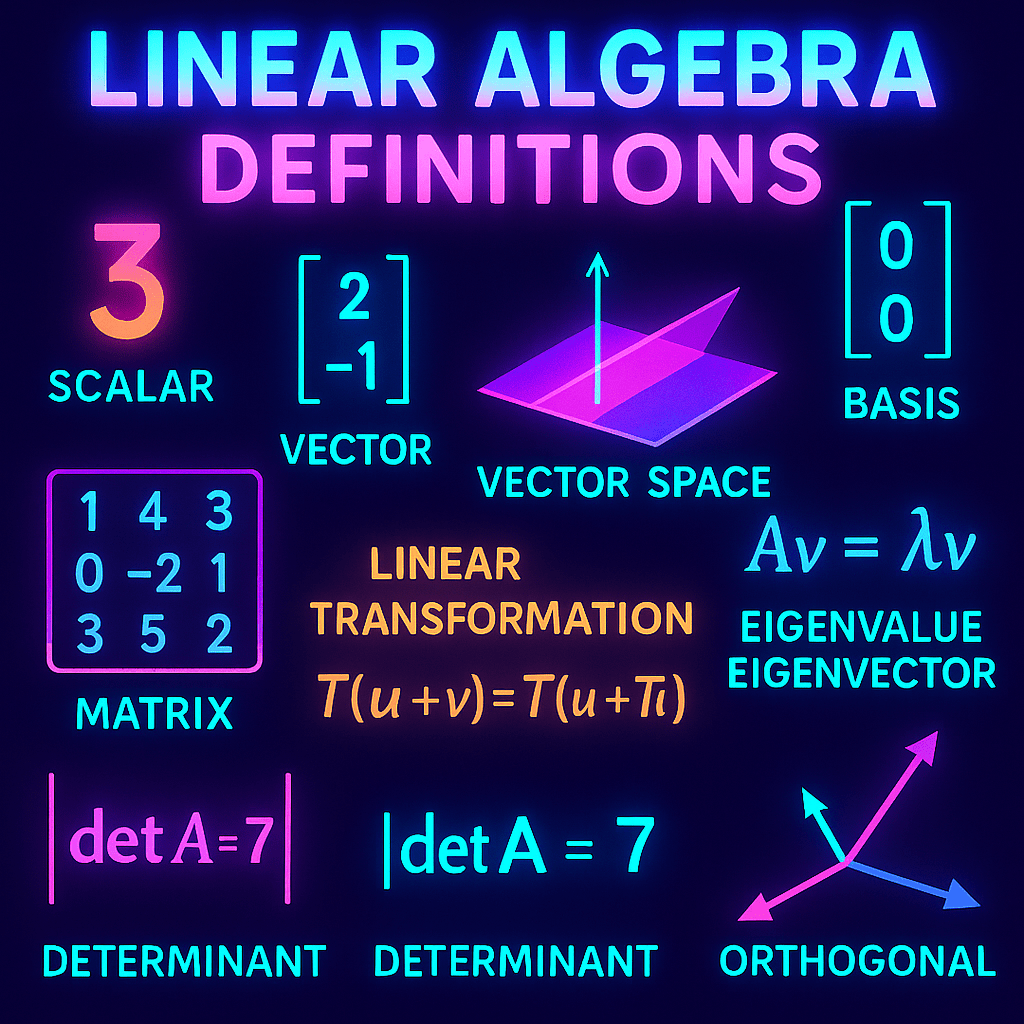

- Scalar: A single numerical value, typically from a field like ℝ or ℂ.

- Vector: An ordered list of numbers (components) that can be added and scaled.

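- Vector Space: A set of vectors over a field of scalars, with two operations (vector addition and scalar multiplication) satisfying the vector space axioms (ten in the common formulation that counts closure under each operation; e.g., associativity, distributivity).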

- Subspace: A subset of a vector space that is also a vector space under the same operations.

- Zero Vector: The unique vector in every vector space such that v + 0 = v for all vectors v.

- Linear Combination: An expression formed by multiplying vectors by scalars and adding the results.

- Span: The set of all linear combinations of a given set of vectors.

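- Linear Independence: A set of vectors is linearly independent if no vector in the set can be written as a linear combination of the others; equivalently, the only linear combination of them equal to the zero vector is the one with all coefficients zero.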

- Basis: A linearly independent set of vectors that spans the entire space.

- Dimension: The number of vectors in any basis of the space.
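
The definitions of span, linear independence, and basis can be checked mechanically for vectors in ℝⁿ. The following is a minimal sketch, assuming vectors are given as lists of integers or fractions; the function names `rank`, `is_linearly_independent`, and `is_basis` are illustrative, not from any library. It relies on the fact that a list of vectors is linearly independent exactly when the matrix with those vectors as rows has rank equal to the number of vectors.

```python
from fractions import Fraction

def rank(vectors):
    """Rank of the matrix whose rows are the given vectors (Gaussian elimination)."""
    rows = [[Fraction(x) for x in v] for v in vectors]
    rank_count = 0
    n_cols = len(rows[0]) if rows else 0
    for col in range(n_cols):
        # Find a row at or below the current pivot position with a nonzero entry.
        pivot = next((r for r in range(rank_count, len(rows)) if rows[r][col] != 0), None)
        if pivot is None:
            continue
        rows[rank_count], rows[pivot] = rows[pivot], rows[rank_count]
        # Eliminate this column from every row below the pivot.
        for r in range(rank_count + 1, len(rows)):
            factor = rows[r][col] / rows[rank_count][col]
            rows[r] = [a - factor * b for a, b in zip(rows[r], rows[rank_count])]
        rank_count += 1
    return rank_count

def is_linearly_independent(vectors):
    # Independent exactly when no row is lost during elimination.
    return rank(vectors) == len(vectors)

def is_basis(vectors, dimension):
    # A basis of an n-dimensional space is n linearly independent vectors.
    return len(vectors) == dimension and is_linearly_independent(vectors)
```

For example, `is_basis([[1, 1], [1, -1]], 2)` holds, while `[[1, 2], [2, 4]]` is dependent because the second vector is twice the first. Exact fractions are used here to avoid floating-point tolerance questions; a numerical library would typically be the practical choice for larger inputs.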

Matrices and Matrix Operations

- Matrix: A rectangular array of numbers arranged in rows and columns.

- Square Matrix: A matrix with the same number of rows and columns.

- Row Vector: A 1×n matrix.

- Column Vector: An n×1 matrix.

- Matrix Addition: Combining matrices of the same size by adding corresponding entries.

- Scalar Multiplication (Matrix): Multiplying every entry of a matrix by the same scalar.

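- Matrix Multiplication: Combining an m×n matrix A with an n×p matrix B to form the m×p matrix AB, whose (i, j) entry is the dot product of row i of A with column j of B. In general, AB ≠ BA.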

- Identity Matrix (Iₙ): A square matrix with 1s on the diagonal and 0s elsewhere. Acts as a multiplicative identity.

- Transpose (Aᵗ): Flips a matrix over its diagonal: row i becomes column i.

- Inverse Matrix (A⁻¹): A square matrix A is invertible if there exists a matrix A⁻¹ such that AA⁻¹ = A⁻¹A = I. When it exists, the inverse is unique.
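
The matrix operations above can be illustrated with matrices stored as lists of rows. This is a small sketch under that assumption; the helper names (`transpose`, `matmul`, `identity`, `inverse_2x2`) and the sample matrices `A` and `B` are chosen for illustration, and the inverse is implemented only for the 2×2 case with integer entries.

```python
from fractions import Fraction

def transpose(a):
    # Row i of the input becomes column i of the output.
    return [list(col) for col in zip(*a)]

def matmul(a, b):
    # An m×n matrix times an n×p matrix gives an m×p matrix.
    if len(a[0]) != len(b):
        raise ValueError("inner dimensions must match")
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def identity(n):
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]

def inverse_2x2(a):
    # Closed-form inverse of [[p, q], [r, s]]; exists only when ps - qr != 0.
    (p, q), (r, s) = a
    det = p * s - q * r
    if det == 0:
        raise ValueError("matrix is singular")
    return [[Fraction(s, det), Fraction(-q, det)],
            [Fraction(-r, det), Fraction(p, det)]]

A = [[2, 1], [5, 3]]        # 2×2 sample matrix
B = [[1, 0, 2], [0, 1, 3]]  # 2×3 sample matrix
```

With these definitions, `matmul(A, B)` is a 2×3 matrix, and both `matmul(A, inverse_2x2(A))` and `matmul(inverse_2x2(A), A)` equal `identity(2)`.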

Linear Transformations

- Linear Transformation (or Linear Map): A function T: V → W that preserves vector addition and scalar multiplication:

T(u + v) = T(u) + T(v)

T(cu) = cT(u)

- Kernel (Null Space): The set of all vectors v such that T(v) = 0.

- Image (Range): The set of all outputs T(v) for v in V.

- One-to-One (Injective): A map T is injective if different inputs map to different outputs.

- Onto (Surjective): A map T is surjective if every element of the codomain is hit by some input.

- Isomorphism: A linear transformation that is both one-to-one and onto (i.e., bijective).

- Matrix Representation of T: The matrix that implements a linear transformation with respect to specific bases.
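
To make the two linearity conditions and the matrix representation concrete, here is a sketch using a hypothetical map T: ℝ² → ℝ³ chosen only for illustration. The helpers `add`, `scale`, `standard_matrix`, and `apply` are likewise illustrative names. With respect to the standard bases, column j of the matrix is the image of the j-th standard basis vector.

```python
def T(v):
    """Hypothetical example map from R^2 to R^3: (x, y) -> (x + y, 2x, 3y)."""
    x, y = v
    return [x + y, 2 * x, 3 * y]

def add(u, v):
    return [a + b for a, b in zip(u, v)]

def scale(c, v):
    return [c * a for a in v]

def standard_matrix(transform, n):
    """Matrix of `transform` in the standard bases: column j is transform(e_j)."""
    columns = [transform([1 if i == j else 0 for i in range(n)]) for j in range(n)]
    return [list(row) for row in zip(*columns)]

def apply(matrix, v):
    # Matrix-vector product: one dot product per row.
    return [sum(a * b for a, b in zip(row, v)) for row in matrix]

M = standard_matrix(T, 2)  # [[1, 1], [2, 0], [0, 3]]
u, v = [4, 5], [-1, 2]
```

For these sample vectors, `T(add(u, v)) == add(T(u), T(v))` and `T(scale(3, u)) == scale(3, T(u))`, and `apply(M, u)` agrees with `T(u)`. Checking a few inputs does not prove linearity; it only illustrates the two conditions.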

Rank and Nullity

- Rank: The dimension of the image (column space) of a matrix.

- Nullity: The dimension of the kernel (null space) of a matrix.

- Rank–Nullity Theorem: For a matrix A with n columns: rank(A) + nullity(A) = n
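
The theorem can be verified on a small example by computing the reduced row echelon form. The sketch below assumes a matrix stored as a list of rows with integer or fractional entries; `rref` and `null_space_basis` are illustrative helper names. Pivot columns count toward the rank, and each free (non-pivot) column contributes one basis vector of the kernel.

```python
from fractions import Fraction

def rref(matrix):
    """Return (reduced row echelon form, list of pivot column indices)."""
    rows = [[Fraction(x) for x in row] for row in matrix]
    pivots = []
    r = 0
    for col in range(len(rows[0])):
        pivot = next((i for i in range(r, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        lead = rows[r][col]
        rows[r] = [x / lead for x in rows[r]]  # scale the pivot to 1
        for i in range(len(rows)):
            if i != r and rows[i][col] != 0:
                factor = rows[i][col]
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        pivots.append(col)
        r += 1
        if r == len(rows):
            break
    return rows, pivots

def null_space_basis(matrix):
    """One basis vector of the kernel per free (non-pivot) column."""
    reduced, pivots = rref(matrix)
    n = len(matrix[0])
    basis = []
    for free in (c for c in range(n) if c not in pivots):
        vec = [Fraction(0)] * n
        vec[free] = Fraction(1)
        for row_index, pivot_col in enumerate(pivots):
            vec[pivot_col] = -reduced[row_index][free]
        basis.append(vec)
    return basis

A = [[1, 2, 3], [2, 4, 6], [1, 0, 1]]  # second row is twice the first
_, pivot_cols = rref(A)
rank_A = len(pivot_cols)
nullity_A = len(null_space_basis(A))
```

Here `rank_A` is 2 and `nullity_A` is 1, which sum to the 3 columns of `A`; the single kernel basis vector is (−1, −1, 1).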

Determinants

- Determinant: A scalar value associated with a square matrix that provides information about invertibility, volume scaling, and orientation.

- Cofactor: The signed minor of a matrix element, used in determinant expansion.

- Minor: The determinant of a smaller matrix formed by deleting one row and one column.

- Singular Matrix: A square matrix with determinant zero; not invertible.

- Nonsingular Matrix: A square matrix with a nonzero determinant; invertible.
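
Minors, cofactors, and the determinant fit together in cofactor (Laplace) expansion. The following sketch expands along the first row; the function names are illustrative, and matrices are assumed to be square lists of rows. This recursive approach takes factorial time, so it is meant to mirror the definitions rather than to be efficient.

```python
def minor(matrix, i, j):
    """Determinant of the submatrix obtained by deleting row i and column j."""
    sub = [row[:j] + row[j + 1:] for k, row in enumerate(matrix) if k != i]
    return det(sub)

def cofactor(matrix, i, j):
    # The signed minor: the sign alternates in a checkerboard pattern.
    return (-1) ** (i + j) * minor(matrix, i, j)

def det(matrix):
    """Cofactor (Laplace) expansion along the first row."""
    if len(matrix) == 1:
        return matrix[0][0]
    return sum(matrix[0][j] * cofactor(matrix, 0, j) for j in range(len(matrix)))

def is_singular(matrix):
    return det(matrix) == 0
```

For instance, `det([[1, 2], [3, 4]])` is −2, so that matrix is nonsingular, while `[[2, 0, 1], [1, 3, 2], [1, 1, 1]]` has determinant 0 and is singular.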

Eigenvalues and Eigenvectors

- Eigenvector: A nonzero vector v such that Av = λv for some scalar λ.

- Eigenvalue: The scalar λ in the equation Av = λv.

- Eigenspace: The set of all eigenvectors corresponding to a particular eigenvalue, plus the zero vector.

- Characteristic Polynomial: The polynomial det(A − λI) used to find eigenvalues.

- Algebraic Multiplicity: The number of times an eigenvalue appears as a root of the characteristic polynomial.

- Geometric Multiplicity: The dimension of the eigenspace associated with an eigenvalue.

- Diagonalizable Matrix: A matrix A is diagonalizable if there exists an invertible matrix P such that P⁻¹AP is diagonal.
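
For a 2×2 matrix the characteristic polynomial det(A − λI) reduces to λ² − tr(A)·λ + det(A), so the eigenvalues follow from the quadratic formula. The sketch below assumes that 2×2 case only; `eigenvalues_2x2` and `is_eigenpair` are illustrative names, and `cmath` is used so that complex eigenvalues are returned rather than raising an error.

```python
import cmath

def eigenvalues_2x2(a):
    """Roots of the characteristic polynomial λ² - tr(A)·λ + det(A)."""
    (p, q), (r, s) = a
    trace = p + s
    determinant = p * s - q * r
    root = cmath.sqrt(trace * trace - 4 * determinant)
    return (trace + root) / 2, (trace - root) / 2

def is_eigenpair(a, value, vector, tol=1e-9):
    """Check A v = λ v entrywise, for a nonzero v."""
    if all(x == 0 for x in vector):
        return False  # the zero vector is never an eigenvector
    image = [sum(x * y for x, y in zip(row, vector)) for row in a]
    return all(abs(lhs - value * x) <= tol for lhs, x in zip(image, vector))

A = [[2, 1], [1, 2]]          # symmetric sample matrix
lam1, lam2 = eigenvalues_2x2(A)
R = [[0, -1], [1, 0]]         # rotation by 90 degrees
rot1, rot2 = eigenvalues_2x2(R)
```

For `A`, the eigenvalues are 3 and 1, with eigenvectors (1, 1) and (1, −1). Because these two eigenvectors are linearly independent, placing them as the columns of P gives a diagonal P⁻¹AP. The rotation matrix `R` has no real eigenvalues; its eigenvalues are ±i.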

Orthogonality and Inner Product Spaces

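- Inner Product: A generalization of the dot product: a function ⟨u, v⟩ that returns a scalar and satisfies positive-definiteness (⟨v, v⟩ ≥ 0, with equality only when v = 0), symmetry (conjugate symmetry over ℂ), and linearity in one argument.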

- Norm (‖v‖): The length of a vector:

‖v‖ = √⟨v, v⟩

- Orthogonal Vectors: Vectors u and v are orthogonal if ⟨u, v⟩ = 0.

- Orthonormal Set: A set of vectors that are both orthogonal and of unit length.

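- Projection: The orthogonal projection of a vector v onto a subspace is the vector in that subspace closest to v; the difference between v and its projection is orthogonal to the subspace.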

- Gram–Schmidt Process: A method to convert a linearly independent set into an orthonormal set with the same span.

- Orthogonal Complement: The set of all vectors orthogonal to every vector in a given subspace.
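
The norm, projection, and Gram–Schmidt definitions can be sketched for ℝⁿ with the standard dot product as the inner product. This is a minimal illustration of the classical Gram–Schmidt procedure, assuming vectors are lists of numbers; the function names and the tolerance `tol` are assumptions made for this example.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def norm(v):
    return math.sqrt(dot(v, v))

def project(v, u):
    """Orthogonal projection of v onto the line spanned by a nonzero u."""
    coeff = dot(v, u) / dot(u, u)
    return [coeff * a for a in u]

def gram_schmidt(vectors, tol=1e-12):
    """Orthonormalize a linearly independent list (classical Gram-Schmidt)."""
    basis = []
    for v in vectors:
        w = list(v)
        for q in basis:
            # Subtract the component of v along each earlier unit vector q.
            coeff = dot(v, q)
            w = [a - coeff * b for a, b in zip(w, q)]
        length = norm(w)
        if length <= tol:
            raise ValueError("input vectors are linearly dependent")
        basis.append([a / length for a in w])
    return basis

q1, q2 = gram_schmidt([[3, 4], [1, 0]])
```

Here `q1` is (0.6, 0.8) up to rounding, and `q2` is a unit vector orthogonal to it. In floating-point arithmetic the classical procedure can lose orthogonality for nearly dependent inputs; the modified Gram–Schmidt variant or a QR factorization from a numerical library is the usual remedy.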

Advanced Concepts

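- Singular Value Decomposition (SVD): A factorization A = UΣVᵗ of a real m×n matrix, where U (m×m) and V (n×n) are orthogonal and Σ is an m×n matrix that is zero off the diagonal, with nonnegative diagonal entries (the singular values). For complex matrices, U and V are unitary and Vᵗ is replaced by the conjugate transpose.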

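- Jordan Form: A block diagonal matrix built from Jordan blocks (an eigenvalue on the diagonal and 1s on the superdiagonal) to which every square matrix over ℂ is similar; it generalizes diagonalization to matrices that are not diagonalizable.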

- Minimal Polynomial: The monic polynomial m(x) of least degree such that m(A) = 0 for a square matrix A.

- Cayley–Hamilton Theorem: Every square matrix satisfies its own characteristic equation: if p(λ) = det(A − λI), then p(A) = 0.

- Spectral Theorem: A real symmetric matrix can be orthogonally diagonalized; that is, A = QDQᵗ with Q orthogonal and D diagonal.
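
The Cayley–Hamilton theorem is easy to check by hand in the 2×2 case, where the characteristic polynomial is p(λ) = λ² − tr(A)·λ + det(A). The sketch below evaluates p at the matrix itself; `matmul` and `char_poly_at_matrix_2x2` are illustrative names, and only 2×2 matrices are assumed.

```python
def matmul(a, b):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def char_poly_at_matrix_2x2(a):
    """Evaluate p(A) = A² - tr(A)·A + det(A)·I for a 2×2 matrix A."""
    (p, q), (r, s) = a
    trace = p + s
    determinant = p * s - q * r
    a_squared = matmul(a, a)
    identity = [[1, 0], [0, 1]]
    return [
        [a_squared[i][j] - trace * a[i][j] + determinant * identity[i][j]
         for j in range(2)]
        for i in range(2)
    ]

A = [[1, 2], [3, 4]]
S = [[2, 0], [0, 2]]  # scalar matrix 2I
```

Both `char_poly_at_matrix_2x2(A)` and `char_poly_at_matrix_2x2(S)` return the zero matrix. The matrix `S` also shows how the minimal polynomial can have lower degree than the characteristic polynomial: its characteristic polynomial is (λ − 2)², but S − 2I is already zero, so its minimal polynomial is λ − 2.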