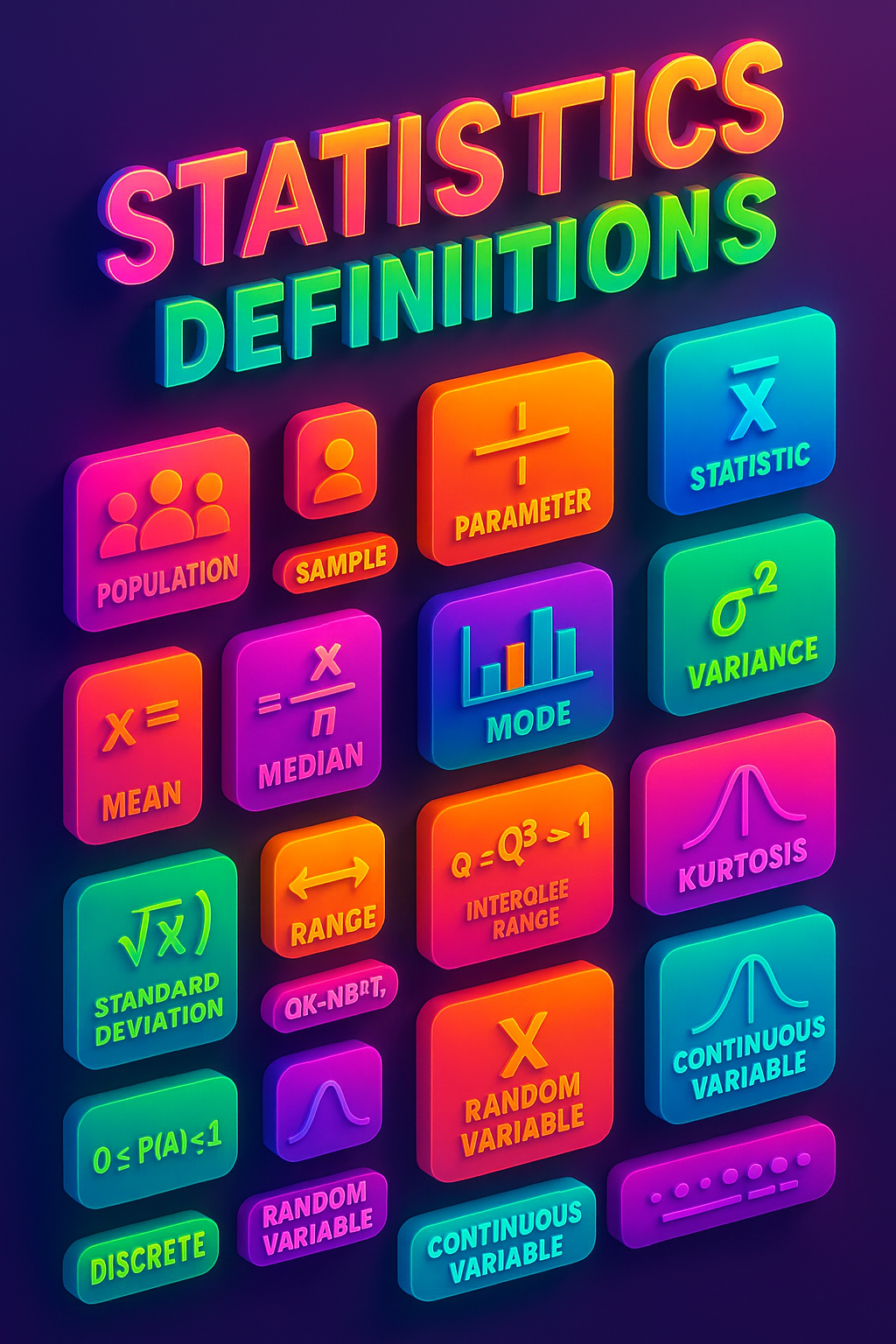

- Population: The entire group of individuals or items that you want to study or draw conclusions about.

- Sample: A subset of the population, selected for analysis.

- Parameter: A numerical value that describes a characteristic of a population, such as the population mean (μ) or variance (σ²).

- Statistic: A numerical value calculated from a sample, such as the sample mean (x̄) or sample variance (s²).

- Mean (Arithmetic Mean): The sum of all values divided by the number of values.

- Median: The middle value when the data are sorted; if there is an even number of values, it is the average of the two middle values.

- Mode: The most frequently occurring value in a data set.

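- Variance: The average of the squared differences from the mean; measures how spread out the data are. For a sample variance (s²), the sum of squared differences is usually divided by n − 1 instead of n.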

- Standard Deviation: The square root of the variance; represents the typical distance of values from the mean.

- Range: The difference between the maximum and minimum values.

- Interquartile Range (IQR): The difference between the third quartile (Q3) and the first quartile (Q1); measures the spread of the middle 50% of the data.

- Skewness: A measure of asymmetry in the distribution of data.

- Kurtosis: A measure of the “tailedness” of the distribution.

- Probability: A number between 0 and 1 representing the likelihood of an event occurring.

- Random Variable: A function that assigns a numerical value to each outcome of a random experiment.

- Discrete Variable: A variable that can take on a finite or countable number of values.

- Continuous Variable: A variable that can take on any value within an interval.

- Probability Distribution: A function that describes the likelihood of each outcome for a random variable.

- Probability Mass Function (PMF): Gives the probability that a discrete random variable takes each of its possible values.

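- Probability Density Function (PDF): Describes the relative likelihood of values of a continuous random variable; the probability of falling in an interval is the area under the density over that interval.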

- Cumulative Distribution Function (CDF): Gives the probability that a random variable is less than or equal to a given value.

- Expected Value (Mean): The probability-weighted average of a random variable's possible values; it is the value the average approaches over many repetitions of the experiment.

- Moment: A quantitative measure related to the shape of a distribution (e.g., mean is the first moment).

- Central Moment: A moment calculated about the mean (e.g., variance is the second central moment).

- Estimator: A rule or formula for calculating an estimate of a population parameter based on sample data.

- Estimate: A specific numerical value obtained from applying an estimator to a sample.

- Bias (of an Estimator): The difference between the expected value of the estimator and the true parameter value.

- Unbiased Estimator: An estimator whose expected value equals the true parameter value.

- Efficiency: Among unbiased estimators, the one with lower variance is more efficient.

- Consistency: An estimator is consistent if it converges in probability to the true parameter value as the sample size increases.

- Sufficiency: A statistic is sufficient if it captures all the information in the data about a parameter.

- Confidence Interval: A range of values, derived from the sample, that is likely to contain the population parameter.

- Confidence Level: The proportion of confidence intervals, constructed from repeated samples, that would contain the parameter.

- Hypothesis Testing: A formal procedure for testing a claim about a population parameter.

- Null Hypothesis (H₀): The default assumption or claim to be tested.

- Alternative Hypothesis (H₁): The rival claim, tested against the null hypothesis.

- p-value: Assuming the null hypothesis is true, the probability of obtaining a test statistic at least as extreme as the one actually observed.

- Significance Level (α): The threshold for the p-value below which the null hypothesis is rejected (commonly 0.05); it is the probability of a Type I error that the test tolerates.

- Type I Error: Rejecting the null hypothesis when it is actually true.

- Type II Error: Failing to reject the null hypothesis when it is actually false.

- Power (of a Test): The probability of correctly rejecting a false null hypothesis.

- Test Statistic: A function of the sample data used to decide whether to reject H₀.

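- T-distribution: A distribution used for inference about a mean when the population variance is unknown and must be estimated from the sample; it differs most from the normal distribution when the sample size is small.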

- Chi-square Distribution: Used in tests for variance and for categorical data (e.g., goodness-of-fit).

- F-distribution: Used in analysis of variance (ANOVA) and regression testing.

- Correlation: A measure of linear relationship between two variables (ranges from –1 to 1).

- Covariance: A measure of how two variables change together; not standardized like correlation.

- Regression: A method for modeling the relationship between a dependent variable and one or more independent variables.

- Simple Linear Regression: Regression with one independent variable.

- Multiple Linear Regression: Regression with two or more independent variables.

- Residual: The difference between an observed value and the value predicted by a model.

- Homoscedasticity: The assumption that residuals have constant variance.

- Heteroscedasticity: When residuals have non-constant variance.

- Multicollinearity: A situation in regression when independent variables are highly correlated with each other.

- Bootstrap: A resampling method used to estimate the distribution of a statistic by sampling with replacement.

- Permutation Test: A nonparametric method to test hypotheses by rearranging labels in the data.

- Bayesian Inference: Statistical inference that updates beliefs about a parameter using Bayes’ Theorem.

- Prior Distribution: In Bayesian analysis, the distribution expressing beliefs about a parameter before seeing the data.

- Posterior Distribution: The updated distribution of a parameter after observing data.

- Likelihood Function: The probability (or probability density) of the observed data, viewed as a function of the parameter; used for estimation (e.g., in Maximum Likelihood Estimation).

- Maximum Likelihood Estimation (MLE): A method of estimating parameters by maximizing the likelihood function.

- Sampling Distribution: The probability distribution of a statistic over all possible samples.

- Degrees of Freedom: The number of values in a calculation that are free to vary.

- Outlier: A value far from the center of the data; may indicate variability or data issues.

- Z-score: The number of standard deviations a data point is from the mean.

- Normal Distribution: A symmetric, bell-shaped distribution that arises frequently in statistics.

- Standard Normal Distribution: A normal distribution with mean 0 and standard deviation 1.

- Uniform Distribution: A distribution in which all values in an interval (or all outcomes in a finite set) are equally likely.

- Exponential Distribution: A continuous distribution used to model the time between events that occur independently at a constant average rate.

- Poisson Distribution: A discrete distribution used to model the number of events in a fixed interval when events occur independently at a constant average rate.

- Binomial Distribution: A discrete distribution describing the number of successes in a fixed number of independent trials.

- Bernoulli Distribution: The simplest discrete distribution, with only two outcomes: success (1) or failure (0).

- Geometric Distribution: Describes the number of independent Bernoulli trials needed to get the first success.

- Hypergeometric Distribution: Describes the number of successes in a sample drawn without replacement from a finite population.
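
To make the descriptive measures above (mean, median, mode, variance, standard deviation, range, IQR, and z-score) concrete, here is a minimal sketch using Python's standard-library `statistics` module (Python 3.8+ for `quantiles`). The data set is made up for illustration, and `describe` is a hypothetical helper name. Note that `statistics.quantiles` defaults to its "exclusive" method; other quartile conventions give slightly different IQRs.

```python
import statistics

def describe(data):
    """Compute the glossary's descriptive statistics for a list of numbers."""
    mu = statistics.mean(data)
    sigma = statistics.pstdev(data)                # population standard deviation
    q1, _, q3 = statistics.quantiles(data, n=4)    # quartiles, default "exclusive" method
    return {
        "mean": mu,
        "median": statistics.median(data),
        "mode": statistics.mode(data),
        "population_variance": statistics.pvariance(data),  # divides by n
        "sample_variance": statistics.variance(data),       # divides by n - 1
        "std_dev": sigma,
        "range": max(data) - min(data),
        "iqr": q3 - q1,
        "z_scores": [(x - mu) / sigma for x in data],       # distance from the mean in SDs
    }

data = [2, 4, 4, 4, 5, 5, 7, 9]    # small made-up data set
stats = describe(data)
for name, value in stats.items():
    print(name, value)
```

For this data the mean is 5, the median is 4.5 (the average of the two middle values, 4 and 5), the mode is 4, the population standard deviation is 2, and the value 9 has a z-score of 2.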
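
Moments, central moments, skewness, and kurtosis fit together as follows: skewness is the third central moment divided by the second central moment raised to the 3/2 power, and kurtosis is the fourth central moment divided by the squared second central moment. The sketch below uses these simple population (moment) forms on made-up data and reports excess kurtosis (kurtosis minus 3, so a normal distribution scores 0). Statistical packages often apply small-sample corrections, so their values can differ somewhat.

```python
def central_moment(data, k):
    """k-th central moment: the average of (x - mean)**k (population form)."""
    mean = sum(data) / len(data)
    return sum((x - mean) ** k for x in data) / len(data)

def skewness(data):
    """Moment coefficient of skewness: m3 / m2**1.5 (positive means a longer right tail)."""
    return central_moment(data, 3) / central_moment(data, 2) ** 1.5

def excess_kurtosis(data):
    """Kurtosis m4 / m2**2, minus 3 so that a normal distribution scores 0."""
    return central_moment(data, 4) / central_moment(data, 2) ** 2 - 3

data = [2, 4, 4, 4, 5, 5, 7, 9]    # made-up data with a longer right tail
print(central_moment(data, 2), skewness(data), excess_kurtosis(data))
```

The second central moment here is the population variance (4), and the positive skewness reflects the long right tail created by the values 7 and 9.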
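
The probability mass functions of the discrete distributions listed above can be written directly from their textbook formulas. The sketch below uses only `math.comb` and `math.factorial` (Python 3.8+). The function names are illustrative, not from any library. The binomial CDF is built by summing the PMF, which matches the CDF definition P(X ≤ x). The geometric PMF assumes the convention that counts trials up to and including the first success.

```python
import math

def bernoulli_pmf(k, p):
    """P(X = k) for a single trial with k in {0, 1}: p if k == 1, 1 - p if k == 0."""
    return p ** k * (1 - p) ** (1 - k)

def binomial_pmf(k, n, p):
    """P(X = k): k successes in n independent trials, each with success probability p."""
    return math.comb(n, k) * p ** k * (1 - p) ** (n - k)

def binomial_cdf(k, n, p):
    """P(X <= k), obtained by summing the PMF from 0 to k."""
    return sum(binomial_pmf(i, n, p) for i in range(k + 1))

def poisson_pmf(k, lam):
    """P(X = k) events in an interval with average rate lam."""
    return lam ** k * math.exp(-lam) / math.factorial(k)

def geometric_pmf(k, p):
    """P(first success occurs on trial k), for k = 1, 2, 3, ..."""
    return (1 - p) ** (k - 1) * p

def hypergeometric_pmf(k, N, K, n):
    """P(k successes) in n draws without replacement from N items, K of which are successes."""
    return math.comb(K, k) * math.comb(N - K, n - k) / math.comb(N, n)

print(binomial_pmf(2, 4, 0.5), binomial_cdf(2, 4, 0.5))
print(poisson_pmf(0, 2.0), geometric_pmf(3, 0.5), hypergeometric_pmf(1, 10, 4, 3))
```

A Bernoulli variable is the binomial case with n = 1, so `bernoulli_pmf(k, p)` and `binomial_pmf(k, 1, p)` agree.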
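
The bias of an estimator can be seen in a small simulation: dividing the sum of squared deviations by n underestimates the population variance on average, while dividing by n − 1 gives an unbiased estimator. This sketch assumes a normal population with σ = 2 (true variance 4) and samples of size 5; the seed, sample size, and repetition count are arbitrary choices. The expected value of the divide-by-n estimator is (n − 1)σ²/n = 3.2.

```python
import random

def average_variance_estimates(n, reps, sigma=2.0, seed=0):
    """Average two variance estimators over many simulated samples of size n."""
    rng = random.Random(seed)
    biased_total = 0.0
    unbiased_total = 0.0
    for _ in range(reps):
        sample = [rng.gauss(0.0, sigma) for _ in range(n)]   # draw from N(0, sigma^2)
        mean = sum(sample) / n
        ss = sum((x - mean) ** 2 for x in sample)            # sum of squared deviations
        biased_total += ss / n          # divide by n: biased low
        unbiased_total += ss / (n - 1)  # divide by n - 1: unbiased
    return biased_total / reps, unbiased_total / reps

biased, unbiased = average_variance_estimates(n=5, reps=20_000)
print(f"divide by n: {biased:.3f}, divide by n - 1: {unbiased:.3f}, true value: 4")
```

Each individual estimate is a statistic computed from one sample; the bias only shows up when the estimates are averaged over many samples.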
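
A hypothesis test, its test statistic, p-value, and matching confidence interval can be sketched with the standard library's `statistics.NormalDist`. This example assumes the population standard deviation is known, which makes it a z-test. The numbers (sample mean 103, hypothesized mean 100, σ = 15, n = 36) are hypothetical. When σ must be estimated from the sample, a t critical value with n − 1 degrees of freedom (for example from `scipy.stats.t.ppf` or a table) would replace `z_crit`.

```python
from statistics import NormalDist

def one_sample_z_test(sample_mean, mu0, sigma, n, alpha=0.05):
    """Two-sided z-test of H0: mu = mu0 when the population sigma is known."""
    se = sigma / n ** 0.5                          # standard error of the sample mean
    z = (sample_mean - mu0) / se                   # test statistic
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))   # two-sided p-value under H0
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)   # about 1.96 when alpha = 0.05
    ci = (sample_mean - z_crit * se, sample_mean + z_crit * se)
    return z, p_value, ci, p_value < alpha

z, p, ci, reject = one_sample_z_test(sample_mean=103, mu0=100, sigma=15, n=36)
print(f"z = {z:.2f}, p = {p:.4f}, 95% CI = ({ci[0]:.2f}, {ci[1]:.2f}), reject H0: {reject}")
```

Here z = 1.2 and the p-value is about 0.23, so H₀ is not rejected at α = 0.05, consistent with the 95% interval (roughly 98.1 to 107.9) containing 100.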
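
Type I error, Type II error, and power can be estimated by running the same test on many simulated data sets. This sketch assumes a two-sided z-test of H₀: μ = 0 with a known σ = 1 and n = 30. When the true mean is 0, every rejection is a Type I error, so the rejection rate should be close to α. When the true mean is 0.5, every rejection is correct, so the rate estimates the power. The seed and repetition count are arbitrary.

```python
import math
import random
from statistics import NormalDist

def rejection_rate(true_mean, n=30, reps=10_000, alpha=0.05, seed=1):
    """Share of simulated two-sided z-tests of H0: mu = 0 (sigma = 1 known) that reject H0."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    se = 1.0 / math.sqrt(n)                    # standard error with sigma = 1
    rejections = 0
    for _ in range(reps):
        sample_mean = sum(rng.gauss(true_mean, 1.0) for _ in range(n)) / n
        z = (sample_mean - 0.0) / se           # test statistic under H0: mu = 0
        if abs(z) > z_crit:
            rejections += 1
    return rejections / reps

type_i_error_rate = rejection_rate(true_mean=0.0)  # H0 true: rejections are Type I errors
power = rejection_rate(true_mean=0.5)              # H0 false: rejections are correct
print(type_i_error_rate, power, 1 - power)         # last value estimates the Type II error rate
```

With these settings the simulated Type I error rate should land near 0.05 and the power near 0.78, the theoretical value for a shift of 0.5σ with n = 30.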
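
Covariance, correlation, simple linear regression, and residuals are closely linked. For ordinary least squares with one predictor, the slope is the covariance of x and y divided by the variance of x, and the intercept makes the line pass through the point of means. The sketch below writes these out by hand on made-up data; the function names are illustrative. Python 3.10+ also provides `statistics.covariance`, `statistics.correlation`, and `statistics.linear_regression`.

```python
def mean(values):
    return sum(values) / len(values)

def covariance(x, y):
    """Sample covariance (divides by n - 1)."""
    mx, my = mean(x), mean(y)
    return sum((a - mx) * (b - my) for a, b in zip(x, y)) / (len(x) - 1)

def correlation(x, y):
    """Pearson correlation: covariance standardized by both standard deviations."""
    return covariance(x, y) / (covariance(x, x) * covariance(y, y)) ** 0.5

def simple_linear_regression(x, y):
    """Ordinary least squares fit of y = intercept + slope * x."""
    slope = covariance(x, y) / covariance(x, x)
    intercept = mean(y) - slope * mean(x)
    return intercept, slope

x = [1, 2, 3, 4, 5]     # made-up predictor values
y = [2, 4, 5, 4, 5]     # made-up responses
intercept, slope = simple_linear_regression(x, y)
residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]  # observed - predicted
print(f"y = {intercept:.2f} + {slope:.2f}x, r = {correlation(x, y):.3f}")
print([round(r, 2) for r in residuals])
```

The fitted line is y = 2.2 + 0.6x with r ≈ 0.77. Because the model includes an intercept, the least-squares residuals sum to zero.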
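
The bootstrap idea (resampling the observed data with replacement and recomputing the statistic each time) can be sketched with `random.choices`. This example builds a percentile bootstrap confidence interval, which is only one of several bootstrap interval methods. The data, the 2,000 resamples, and the seed are assumptions made for illustration, and `bootstrap_ci` is a hypothetical helper name.

```python
import random
import statistics

def bootstrap_ci(data, stat=statistics.mean, reps=2_000, level=0.95, seed=42):
    """Percentile bootstrap confidence interval for any statistic of a sample."""
    rng = random.Random(seed)
    estimates = sorted(
        stat(rng.choices(data, k=len(data)))   # resample with replacement, same size
        for _ in range(reps)
    )
    lower_idx = int(reps * (1 - level) / 2)    # e.g. the 50th of 2,000 sorted estimates
    upper_idx = int(reps * (1 + level) / 2) - 1
    return estimates[lower_idx], estimates[upper_idx]

data = [4.1, 5.3, 2.8, 6.0, 4.7, 5.5, 3.9, 4.4, 5.1, 4.8]   # hypothetical measurements
low, high = bootstrap_ci(data)
print(f"95% bootstrap CI for the mean: ({low:.2f}, {high:.2f})")
print("95% bootstrap CI for the median:", bootstrap_ci(data, stat=statistics.median))
```

Passing a different `stat` is the main appeal of the method: the same resampling loop approximates the sampling distribution of a median or other statistic that has no simple standard-error formula.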
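
A permutation test for a difference in means works by repeatedly shuffling the pooled observations, reassigning them to the two groups, and counting how often the shuffled difference is at least as large as the observed one. This sketch approximates the permutation distribution with random shuffles instead of enumerating every split, and uses an add-one correction so the estimated p-value is never exactly zero. The group values are hypothetical.

```python
import random

def permutation_test(a, b, reps=10_000, seed=7):
    """Approximate two-sided permutation test for a difference in group means."""
    rng = random.Random(seed)
    observed = abs(sum(a) / len(a) - sum(b) / len(b))
    pooled = list(a) + list(b)
    extreme = 0
    for _ in range(reps):
        rng.shuffle(pooled)                        # randomly reassign the group labels
        new_a, new_b = pooled[:len(a)], pooled[len(a):]
        diff = abs(sum(new_a) / len(new_a) - sum(new_b) / len(new_b))
        if diff >= observed - 1e-12:               # small tolerance for floating-point ties
            extreme += 1
    return (extreme + 1) / (reps + 1)              # add-one correction keeps p above zero

group_a = [12, 14, 15, 16, 18]   # hypothetical measurements
group_b = [8, 9, 10, 11, 13]
p_value = permutation_test(group_a, group_b)
print(p_value)
```

For these groups only 4 of the 252 possible splits are at least as extreme as the observed difference of 4.8, so the estimate should be close to 0.016.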
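
Prior, likelihood, and posterior fit together cleanly in the Beta-binomial model. A Beta(α, β) prior on a success probability combined with a binomial likelihood for k successes in n trials gives a Beta(α + k, β + n − k) posterior. The prior Beta(2, 2) and the data (7 successes in 10 trials) below are hypothetical, and `Fraction` is used only to keep the means exact.

```python
from fractions import Fraction

def beta_binomial_update(alpha, beta, successes, trials):
    """Posterior Beta parameters after binomial data, starting from a Beta(alpha, beta) prior."""
    return alpha + successes, beta + (trials - successes)

def beta_mean(alpha, beta):
    """Mean of a Beta(alpha, beta) distribution."""
    return Fraction(alpha, alpha + beta)

prior = (2, 2)                                   # prior mean 1/2
posterior = beta_binomial_update(*prior, successes=7, trials=10)
print(posterior, beta_mean(*posterior))          # (9, 5) 9/14
```

The posterior mean 9/14 ≈ 0.64 lies between the prior mean (0.5) and the maximum likelihood estimate from the data alone (7/10), showing how the data update the prior belief.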
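
Maximum likelihood estimation can be illustrated with an exponential model, where the log-likelihood of the rate λ is n·log λ − λ·Σx and the maximizer has the closed form 1/x̄. The sketch below finds the maximum with a simple grid search, a crude stand-in for a real numerical optimizer, and compares it with the closed form. The data and the grid are hypothetical choices.

```python
import math

def exponential_log_likelihood(rate, data):
    """log L(rate) = n*log(rate) - rate*sum(data) for i.i.d. exponential observations."""
    return len(data) * math.log(rate) - rate * sum(data)

data = [0.5, 1.2, 0.3, 2.0, 1.0]               # hypothetical waiting times
grid = [i / 1000 for i in range(1, 5001)]      # candidate rates 0.001, 0.002, ..., 5.0
numeric_mle = max(grid, key=lambda r: exponential_log_likelihood(r, data))
closed_form_mle = len(data) / sum(data)        # equals 1 / sample mean
print(numeric_mle, closed_form_mle)
```

Both approaches give a rate of about 1.0 here, because the sample mean of these waiting times is 1.0.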

Statistics Definitions

Discover more from SodakAI: Bespoke AI Solutions
